You can view analytics about scores, response frequencies, question quality, and distractor quality for auto-graded multiple choice questions. After booklets have been matched to students and the assessment has been graded, you can view the analytics by navigating to the Results page of your assessment and clicking the Multiple choice analytics button.

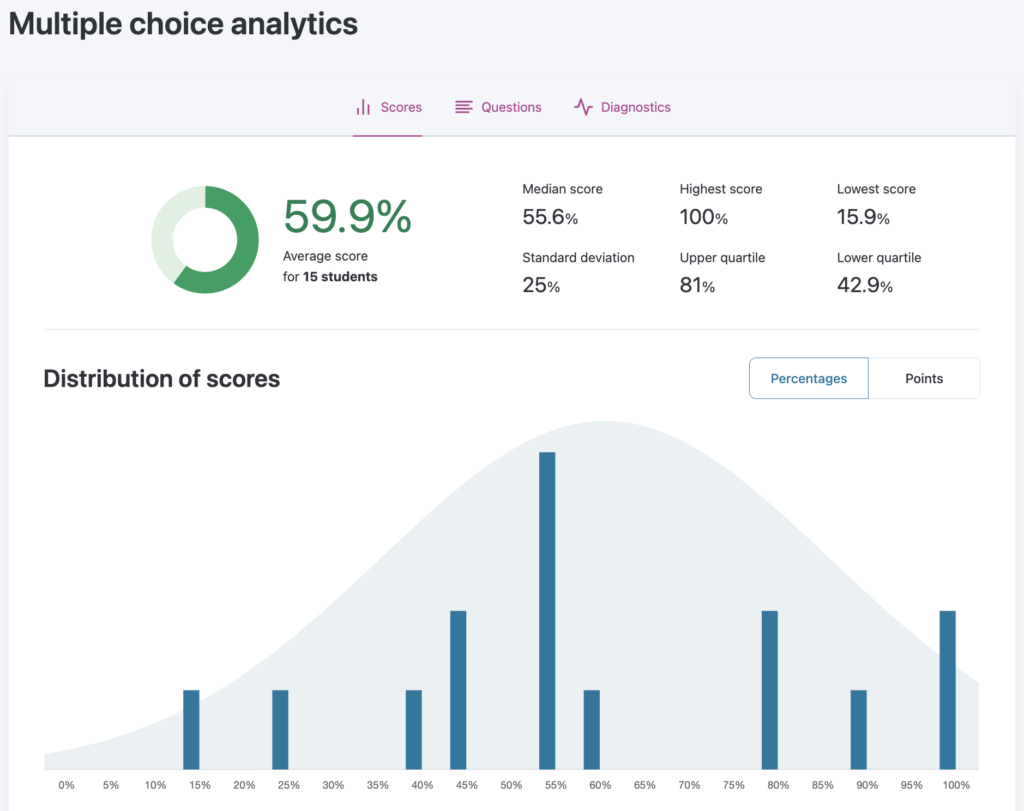

Scores

The Scores page displays statistics for the total scores on the multiple choice component of your assessment. You can also download the data as CSV files to analyze question performance and student responses.

- Number of students: The total number of students matched to a graded assessment.

- Average score: Mean total score

- Median score: Middle score when the total scores are sorted from lowest to highest

- Lower quartile: Middle score of the lower half of scores when sorted from lowest to highest

- Upper quartile: Middle score of the upper half of scores when sorted from lowest to highest

- Highest score: Highest total score

- Lowest score: Lowest total score

- Standard deviation: Measures the amount of variation in the total scores

$$s = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(x_i - \bar{x}\right)^2}$$

where n is the number of students, xᵢ is the total score for student i, and x̄ is the average total score on the test.

These summary statistics are mode-independent: they summarize whatever scores the chosen scoring mode produces.
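The statistics in the list above can be reproduced from a list of total scores. The Python sketch below is a minimal illustration, not Crowdmark's implementation. The function name `summary_statistics` is hypothetical. It assumes the population standard deviation (dividing by n) and a quartile convention that excludes the median from both halves when the number of students is odd. It needs at least two scores.

```python
import statistics


def summary_statistics(total_scores):
    """Summarize total multiple choice scores, one per matched student."""
    scores = sorted(total_scores)
    n = len(scores)
    # Assumption: for an odd count, the median belongs to neither half
    # when the lower and upper quartiles are taken.
    lower_half = scores[: n // 2]
    upper_half = scores[(n + 1) // 2 :]
    return {
        "number_of_students": n,
        "average": statistics.fmean(scores),
        "median": statistics.median(scores),
        "lower_quartile": statistics.median(lower_half),
        "upper_quartile": statistics.median(upper_half),
        "highest": scores[-1],
        "lowest": scores[0],
        # Assumption: population standard deviation (divides by n).
        "standard_deviation": statistics.pstdev(scores),
    }
```

For the scores 2, 4, 4, 4, 5, 5, 7, 9 this gives an average of 5, a median of 4.5, quartiles of 4 and 6, and a standard deviation of 2.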

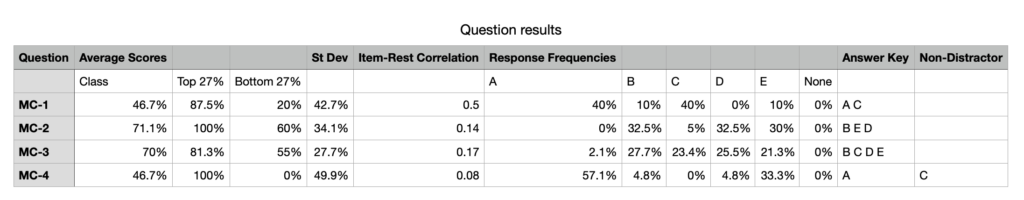

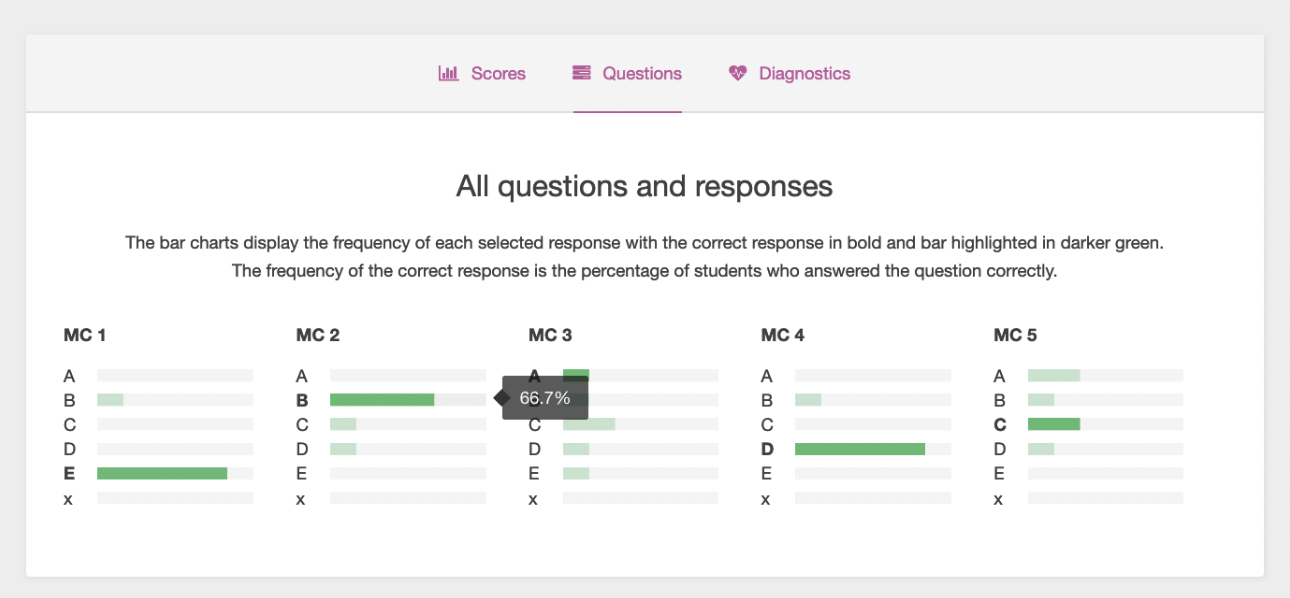

Question data export

From the Scores page, you can download a CSV file of data for each question. The following statistics are available in the export:

- Average scores: Mean item scores expressed as a percentage of full credit for the given question. For Either/or questions, this is the percentage of students who answered the question correctly. For Partial or Exact questions, it reflects the mean proportion of available points earned.

- Class: Mean item score (as a percentage) across all students; this is the same as “Average scores” above.

- Top 27%: Mean item score (as a percentage) for students in the top 27% of total scores.

- Bottom 27%: Mean item score (as a percentage) for students in the bottom 27% of total scores.

- Standard Deviation: Measures the amount of variation in the question score.

- Item-Rest Correlation: Measures how well the question discriminates between high- and low-scoring students. See the Discrimination section for the full formula. A question with an item-rest correlation below 0.2 should be rejected or improved by revision.

- Response Frequencies: Percent of students who selected each response (A, B, C, D, E, or x for no response).

- Answer Key: Correct response or responses to the question.

- Non-Distractors: Response(s) to the question not selected by any students.
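The Class, Top 27%, Bottom 27%, and Non-Distractors columns can be approximated as follows. This is a hedged sketch rather than the export's actual code: `group_item_means` and `non_distractors` are hypothetical names, each group is assumed to hold 27% of the class rounded to the nearest student, ties at the cut-off are broken by input order, and each student's response is assumed to be a string of selected letters (such as "B" or "AC") or "x" for no response.

```python
def group_item_means(item_scores, total_scores, full_credit, fraction=0.27):
    """Mean item score (% of full credit) for the class, top group, and bottom group.

    item_scores[j] and total_scores[j] both belong to student j.
    """
    n = len(total_scores)
    # Assumption: group size is 27% of the class rounded to the nearest
    # student (minimum one); sorted() is stable, so ties keep input order.
    k = max(1, round(fraction * n))
    ranked = sorted(range(n), key=lambda j: total_scores[j], reverse=True)

    def percent(indices):
        indices = list(indices)
        return 100 * sum(item_scores[j] for j in indices) / (len(indices) * full_credit)

    return percent(range(n)), percent(ranked[:k]), percent(ranked[-k:])


def non_distractors(responses, options="ABCDE"):
    """Options that no student selected; "x" marks a blank response."""
    selected = set()
    for response in responses:
        if response != "x":
            selected.update(response)
    return [option for option in options if option not in selected]
```

With ten students, each group holds three students, so a question answered correctly by exactly the top three scorers and two others shows 50% for the class, 100% for the top group, and 0% for the bottom group.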

Student data export

From the Scores page, you can download a CSV file of data for each student. The following statistics are available in the export:

- Scores: Score on each multiple choice question

- Total Score: Total score on all multiple choice questions. Total score has a minimum value of 0. If the sum of the scores produces a negative value, Crowdmark will record it as 0.

- Responses: Selected responses for each question (A, B, C, D, E, or x for no response).
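The Total Score rule (a negative sum is recorded as 0) and the shape of a per-student export can be sketched like this. `total_score` and `student_export` are hypothetical names, and the column headers are illustrative only; the actual CSV may label or order its columns differently.

```python
import csv
import io


def total_score(question_scores):
    """Sum of question scores; a negative sum is recorded as 0."""
    # Only the total is floored; individual question scores are kept as-is.
    return max(0, sum(question_scores))


def student_export(rows):
    """Render (student, question_scores, responses) rows as CSV text.

    Column names here are hypothetical, chosen only for illustration.
    """
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    n_questions = len(rows[0][1])
    writer.writerow(
        ["Student"]
        + [f"Q{q} score" for q in range(1, n_questions + 1)]
        + ["Total Score"]
        + [f"Q{q} response" for q in range(1, n_questions + 1)]
    )
    for student, scores, responses in rows:
        writer.writerow([student, *scores, total_score(scores), *responses])
    return out.getvalue()
```

A student with question scores 1 and -1 gets a total of 0, and one with 1, 1, and -0.5 gets 1.5.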

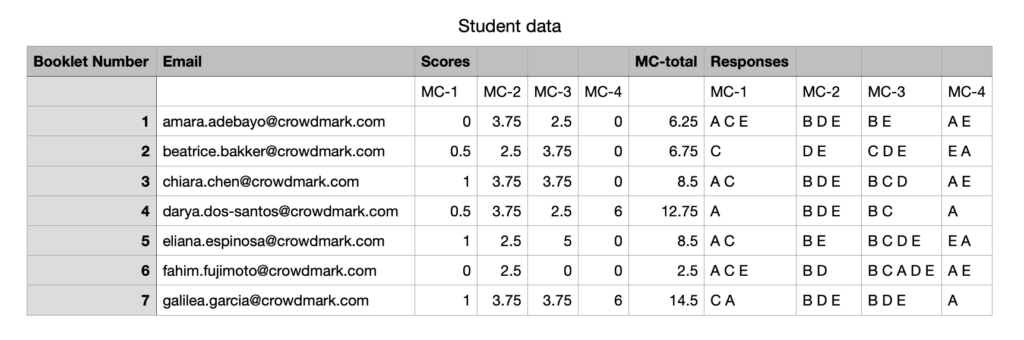

Questions

The Questions page shows a bar chart of how often each option was chosen for each multiple choice question. For bubble sheets, students who left the question unanswered appear in the last bar, labeled “x”. You can hover over a bar to see the percentage of students who chose that option. Correct answers are shown in bold, and their bars are highlighted in dark green.
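The percentages behind each bar can be computed from raw responses. In this sketch, `response_frequencies` and `print_bar_chart` are hypothetical helpers. A student who selects several options on a multiple response question is assumed to count toward each of them, so the percentages for such a question can add up to more than 100%. A `*` stands in for the bold marking of correct options.

```python
from collections import Counter


def response_frequencies(responses, options="ABCDE"):
    """Percent of students selecting each option, with "x" for no response."""
    counts = Counter()
    for response in responses:
        if response == "x":
            counts["x"] += 1
        else:
            # A multiple response answer such as "AC" counts once per option.
            counts.update(set(response))
    n = len(responses)
    return {option: 100 * counts[option] / n for option in [*options, "x"]}


def print_bar_chart(frequencies, answer_key):
    """Text stand-in for the bar chart; '*' marks a correct option."""
    for option, percent in frequencies.items():
        mark = "*" if option in answer_key else " "
        bar = "#" * round(percent / 5)  # one '#' per 5 percentage points
        print(f"{option}{mark} {bar:<20} {percent:5.1f}%")
```

For example, `print_bar_chart(response_frequencies(["A", "B", "B", "x"]), answer_key="B")` prints one row per option, with B marked as correct.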

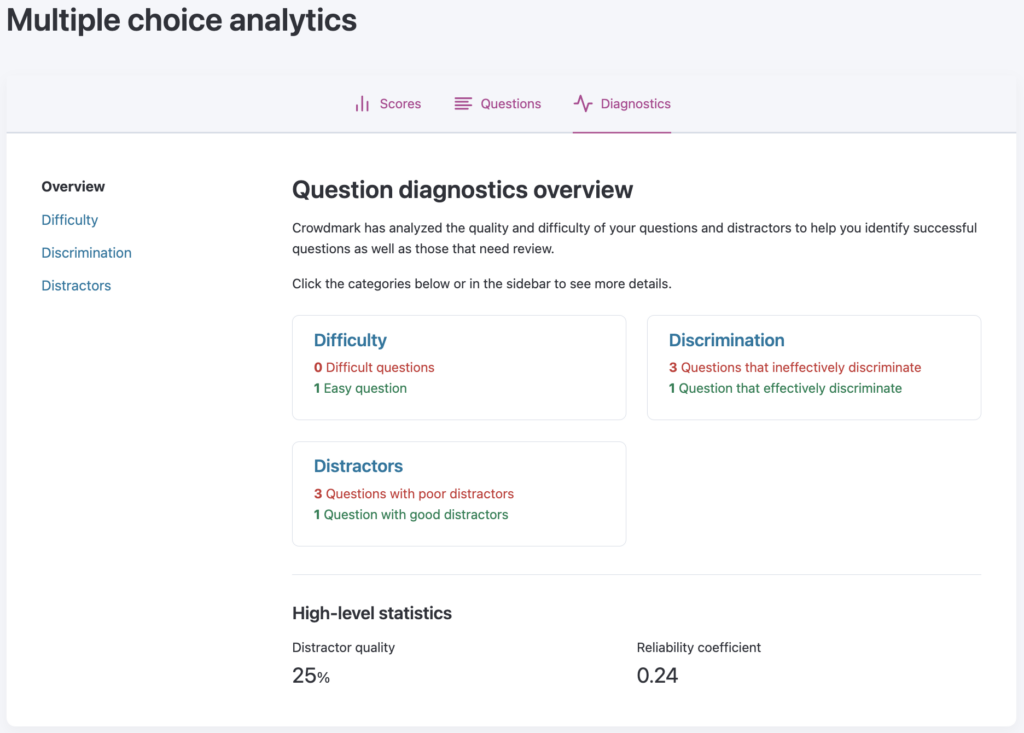

Diagnostics

The Diagnostics page summarizes your multiple choice questions by difficulty, discrimination, and distractors. These diagnostics, often referred to as item analysis, give you insight into your individual multiple choice questions and provide statistics to help you judge their quality. Crowdmark highlights your most difficult and easiest questions, the questions that are most and least effective at discriminating between your best- and worst-performing students, and questions that include functional and nonfunctional distractors.

Difficulty: The difficulty of a question is determined by computing the mean item score expressed as a percentage of full credit for that question.

- For questions that have a single correct response, or for Either/or multiple response questions, this equals the percentage of students who answered correctly.

- For Partial questions, this equals the mean proportion of the question’s points earned, averaged across all students.

- For Exact questions, this equals the percentage of students who selected the exact correct set of answers.

Crowdmark classifies questions as easy when the mean item score exceeds 70% of full credit and difficult when the mean item score is below 30% of full credit.
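Because difficulty is computed from item scores after the scoring mode has been applied, one formula covers all three cases. The sketch below assumes those item scores are already available. `difficulty` and `classify_difficulty` are hypothetical names, and the label "neither" for questions between the two thresholds is an illustrative assumption.

```python
def difficulty(item_scores, full_credit):
    """Mean item score as a percentage of full credit.

    item_scores are the points each student earned on the question after the
    Either/or, Partial, or Exact scoring rule has been applied.
    """
    return 100 * sum(item_scores) / (len(item_scores) * full_credit)


def classify_difficulty(percent):
    """Apply the 70% / 30% thresholds for easy and difficult questions."""
    if percent > 70:
        return "easy"
    if percent < 30:
        return "difficult"
    return "neither"
```

For instance, a 2-point Partial question on which four students earn 2, 1, 0, and 2 points has a difficulty of 62.5%, so it is neither easy nor difficult.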

Discrimination: The discrimination of a question refers to the correlation between student scores on a particular question and total scores on the test.

Crowdmark uses the item-rest correlation — also called the corrected item-total correlation — to calculate the discrimination of each question. This is a Pearson correlation coefficient between the item score and the rest score (total score minus the item score). Excluding the item from the total avoids part-whole inflation and produces a corrected estimate. The formula is:

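$$r_{it} = \frac{\operatorname{cov}(X_i,\ T - X_i)}{\sqrt{\operatorname{var}(X_i)\,\operatorname{var}(T - X_i)}}$$

where Xᵢ is the vector of scores earned by each student on the question, and T − Xᵢ is the vector of each student’s total multiple choice scores minus their score on the question.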

A positive item-total correlation for a question indicates that students who scored well overall tended to score well on that question. Generally:

- rᵢₜ > 0.40: very good discriminator

- 0.20 ≤ rᵢₜ ≤ 0.40: acceptable discriminator

- rᵢₜ < 0.20: should be rejected or improved by revision
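The item-rest correlation described above, together with the rating bands, can be computed directly. This is a minimal sketch with hypothetical function names. It returns `nan` when either the item scores or the rest scores are constant (for example, when every student answered correctly), a case in which the correlation is undefined; such a question falls through to the lowest rating.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences; nan if either is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return math.nan
    return sxy / math.sqrt(sxx * syy)


def item_rest_correlation(item_scores, total_scores):
    # Rest score: each student's total minus their score on this question.
    rest = [t - s for s, t in zip(item_scores, total_scores)]
    return pearson(item_scores, rest)


def rate_discrimination(r):
    if r > 0.40:
        return "very good discriminator"
    if r >= 0.20:
        return "acceptable discriminator"
    return "reject or revise"  # also reached when r is nan
```

With item scores 1, 1, 0, 0 and totals 5, 4, 2, 1, the rest scores are 4, 3, 2, 1 and the correlation is 2/√5 ≈ 0.89, a very good discriminator.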

Item-total correlations can also be calculated for each incorrect response (distractor). A question should have a negative item-total correlation for each of its distractors, indicating that incorrect responses were selected by the weaker students.

Distractors: Distractors are the incorrect responses to a question.

Crowdmark classifies a question as having poor distractors when an incorrect response was selected by fewer than 5% of students or was selected by top-performing students.

Crowdmark classifies a question as having good distractors when every incorrect response was chosen by at least 5% of students and the incorrect responses were selected by weaker students.
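Distractor classification for a single question can be sketched as below. This is an interpretation, not Crowdmark's code: it reads "selected by top performing students" as a non-negative correlation between choosing the option and total score, in line with the negative item-total correlation required of a good distractor. The function names are hypothetical, and each response is assumed to be a string of selected letters, or "x" for a blank.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences; nan if either is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return math.nan
    return sxy / math.sqrt(sxx * syy)


def classify_distractors(responses, total_scores, answer_key, options="ABCDE"):
    """Label each incorrect option of one question "good" or "poor".

    responses[j] is the string of letters student j selected ("x" if blank);
    total_scores[j] is that student's total multiple choice score.
    """
    n = len(responses)
    labels = {}
    for option in options:
        if option in answer_key:
            continue  # correct responses are not distractors
        chose = [1 if option in response else 0 for response in responses]
        frequency = 100 * sum(chose) / n
        # An option nobody chose gives a constant indicator, so r is nan and
        # the comparison below is False, which marks the option poor.
        r = pearson(chose, total_scores)
        labels[option] = "good" if frequency >= 5 and r < 0 else "poor"
    return labels


def has_good_distractors(labels):
    return all(label == "good" for label in labels.values())
```

An option chosen only by the highest scorer, or by nobody, comes out as poor, and a single poor option is enough for the question to be flagged.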

High-level statistics

Distractor quality: Crowdmark measures the distractor quality of the overall test by calculating the percentage of good distractors on the test. A good distractor has a response frequency of at least 5% and a negative item-total correlation (i.e., selected more often by lower-performing students).
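At the test level, distractor quality is the share of all incorrect options that meet both conditions. A hedged sketch, assuming the response frequency (in percent) and item-total correlation of each distractor have already been computed; `distractor_quality` is a hypothetical name.

```python
def distractor_quality(distractors):
    """Percentage of good distractors on the whole test.

    distractors: one (frequency_percent, item_total_correlation) pair for
    every incorrect option across all questions.
    """
    good = sum(1 for frequency, r in distractors if frequency >= 5 and r < 0)
    return 100 * good / len(distractors)
```

For four distractors where only the first and last are both chosen by at least 5% of students and negatively correlated with total score, the result is 50%.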

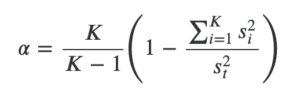

Reliability coefficient: Measures whether the test as a whole discriminates between higher and lower performing students. Crowdmark uses Cronbach’s Alpha (α) to calculate the test reliability:

$$\alpha = \frac{K}{K-1}\left(1 - \frac{\sum_{i=1}^{K} s_i^2}{s_t^2}\right)$$

where K is the number of questions, sᵢ² is the population variance of scores on question i, and sₜ² is the population variance of total test scores.

Cronbach’s Alpha typically ranges between 0 and 1, though it can be negative, for example with small samples or when question scores are negatively correlated with one another. The closer to 1, the more reliable the exam, meaning its questions consistently discriminate between higher and lower performing students.
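Cronbach’s Alpha can be computed from a student-by-question score matrix. This sketch (the function name is hypothetical) uses population variances, as the text specifies, and assumes at least two questions and a nonzero variance in total scores; otherwise the formula divides by zero.

```python
import statistics


def cronbach_alpha(score_matrix):
    """score_matrix[j][i] is student j's score on question i."""
    k = len(score_matrix[0])
    # Population variance of each question's scores across students.
    item_variances = [
        statistics.pvariance([row[i] for row in score_matrix]) for i in range(k)
    ]
    # Population variance of the students' total scores.
    total_variance = statistics.pvariance([sum(row) for row in score_matrix])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)
```

Two questions that rank students identically, as in `[[1, 1], [0, 0], [1, 1], [0, 0]]`, give α = 1, while two uncorrelated questions, as in `[[1, 0], [0, 1], [1, 1], [0, 0]]`, give α = 0.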

The interpretation of Alpha depends on the purpose of the test:

- Tests intended to distinguish between students who have mastered the material and those who have not should have α ≥ 0.5. A result of α < 0.3 is considered poor for such tests.

- If the purpose of the test is to ensure that all students have mastered essential concepts (mastery testing), then Alpha should be close to 0.

Sources:

- Cronbach’s Alpha on Wikipedia

- Cronbach, L. J. (1951). Coefficient alpha and the internal structure of tests. Psychometrika, 16(3), 297–334.

- Distractor Efficiency in an Item Pool for a Statistics Classroom Exam: Assessing Its Relation With Item Cognitive Level Classified According to Bloom’s Taxonomy

- Lord, F. M., & Novick, M. R. (1968). Statistical theories of mental test scores. Addison-Wesley.

- Item-Score Reliability as a Selection Tool in Test Construction

- Item-Score Reliability in Empirical-Data Sets and Its Relationship With Other Item Indices

- Pearson Correlation Coefficient on Wikipedia
